More on the Wisdom of Krauts

The last post, "The Wisdom of Krauts," got sidetracked by reference to a conversation between Malcolm Gladwell, author of The Tipping Point and, more recently, Blink: The Power of Thinking Without Thinking, and James Surowiecki, another New Yorker staff writer, who wrote The Wisdom of Crowds. I thought the exchange would provide a useful summary of the books, but it was a lot to read at one sitting.

As a result, I never quite got around to the point I was stalking. I'm pretty certain it was going to contrast ideas about crowds, charismatic leaders and drastically destructive decision making (think Nazism) with other current popular thinking about the ways groups and experts can work quickly toward constructive decisions.

Thus, "The Wisdom of Krauts." I offer this explanation for those careful readers who actually expect the content of a post to relate to its header. I'm going to head in that direction once more.

If I led you off track, and you read the Gladwell/Surowiecki back and forth, be sure to read the comment by ShriekingViolet, who did a good job pointing out what the two authors failed to critique in each other's books. That is, there's a strong argument to be made for broadening information gathering, but that's not the same as crowds actually solving the problem. She points out that conscious expertise was still involved in many of the examples — in setting up the information-gathering framework, evaluating the results, or both. Likewise, organizations have long been aware of the need to screen out biases and combat group-think.

If you're not familiar with the two books, Surowiecki examines how aggregating a wide array of perspectives can result in better decisions, in part by filtering out individual mistakes or blind spots. Gladwell focuses on how experts may quickly size up situations and arrive at better conclusions through "rapid cognition," compared to those who rely on exhaustive study. I've seen both methods work firsthand many times in my work as a communicator and manager.

The group death march

A classic simulation called Desert Survival Situation™ has been used for decades in team-building. You are part of a group that has survived a crash landing in the Sonoran Desert. The pilot and copilot are killed and the plane is incinerated, but certain survival items remain. To succeed in the simulation, you decide individually, and then as a group, the optimum order of 15 survival items you'd want, along with certain actions to take.

Years ago, I participated in one of these simulations. The exercise was set up as a competition among five teams composed of managers from other Fortune 500 companies, including engineers, military veterans, sales managers, financial types and others regarded as future leaders in their companies. Because each group comprised people from different companies who were all essentially peers, there was no established hierarchy for sorting through the problem.

Our team "won" the simulation by making a series of decisions that would lead to survival. Although all teams made better collective decisions than members would have as individuals, all the other groups killed themselves, some surprisingly quickly. As we reviewed the results, a common theme emerged. In several of the dead teams, a strong "leader" emerged who led the team to make fatefully flawed choices, primarily by asserting expertise he didn't have or overriding contrary points of view. The element of competition — we all threw a dollar in a pot, to be split by the winning team — probably contributed to the outcome. We didn't just want to survive, we wanted to be proven right, superior to the other teams. (Also, looking back, it occurs to me the teams were exclusively male.)

The majority of these managers, presumably the semi-cream of the corporate crop, went to their simulated deaths by following an errant leader, losing sight of the real objective, or simply becoming dysfunctional.

Outsmarting yourself

For years, my marketing communications company has relied on a standard variety of selection tools for evaluating potential hires — not to mention 30+ years of experience-laden intuition. Methods include multiple interviews, portfolio reviews, official and unofficial reference checks, plus a personality assessment tool called Profiler that relates an individual's personal characteristics to the profiles of successful job holders. The insight gained from all these tools is supposed to help us make good hiring decisions.

It's interesting to look back at hiring mistakes and successes to see that my intuition was almost invariably correct, and so were the assessments! When mistakes were made, it was not so much one overriding the other as it was my simple desire for resolution of my needs. At some point, I simply wanted the person to be the right one, and I convinced myself they were, overwhelming both analysis and gut. I never, ever immediately wanted to hire the wrong person.

Yet, as Surowiecki writes, unconscious reactions can be "sabotaged by prejudice, stress, inexperience, and complexity, so that intuition ends up being worse, not better, than deliberation."

So what does any of this have to do with decision making in the public realm?

We have a leader who is both afflicted with extreme certitude and highly reliant upon his gut (perhaps with an assist from the Almighty's guiding hand). George W. Bush looked the former head of the KGB in the eye and immediately sensed Vladimir Putin was a man he could trust. He, and most of his organization charged with doing the standard analytical work, concluded that Saddam was a threat to unleash nukes — if not "imminently," then soon enough to force us to skip all the conventional niceties of diplomacy, sanctions and further inspections.

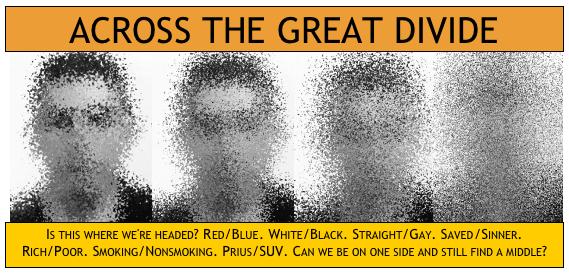

Political parties treat any contrary or nuanced position as apostasy and research the opposing side for positions that can be distorted and exploited, rather than searching for potential common ground.

Elected officials formulate policy on the basis of what they believe should be rather than what is, forming judgments and then going in search of rationales, as in the quick decisions to invade Iraq, reform Social Security or teach "intelligent design" in science classes. Seizing WMDs becomes removing tyrants becomes installing democracy. Preventing a crisis becomes getting investors a better return becomes giving poor people a fair shake. God the Father creationism becomes a refutation of evolution becomes an alternative framework to natural selection. Whatever it takes to sell.

The national agenda is in the throes of rapid without the cognition. Of crowds without the wisdom. Of pattern seeking over pattern recognition.

Right now, our leaders are listening neither to authentic experts nor to the diverse collective. Like those simulated dead managers, they are focused on individual winning. If we want our society to survive, we should not follow them into the desert.

2 Comments:

A simulation is not the same as a real life situation. Perhaps the strong, decisive types ended up "dead" because the outcomes were pre-ordained by the authors of the exercise. Consider the military near-catastrophe at Omaha Beach on D-Day. How would that simulation be scripted by present day team builders? The fog of war--the fog of any rapidly changing human event--is something better described by the "butterfly effect" theoreticians than by armchair corporate exercise designers. As one who makes his living stepping to the point and making critical decisions, I appreciate the difficulties leaders face when confronted with limited information, time and resources. And I believe there are clearly times for collaborative discussions about directions and also times when it's time to lead, follow or get out of the way.

True, simulations aren't the same as a real life situation, but they are effective learning aids for that very reason. A mistake isn't fatal. Airlines train pilots in simulators, so they don't crash $100-million aircraft. The military uses war games and simulations like this so soldiers can learn survival skills without killing themselves and their buddies. Businesses use them so executives don't bankrupt the company.

The effectiveness of the simulation I wrote about — along with others I've experienced — was not that it prepared me for a specific real-life event. The value came from seeing how I and others made decisions and interacted with other people under pressure.

The "armchair corporate exercise designers" didn't preordain the outcome of the exercise. It was based upon U.S. Air Force survival training and the realities of nature.

I don't think its design was biased against strong leaders. Teams could survive if one strong, decisive type made all the right decisions and people followed. They could also survive if the leader marshalled the group knowledge and then made the ultimate decisions. But this didn't happen with the groups I witnessed, so that was a real learning for me — especially since I had an abundance of self-confidence and a tendency to lead rather than follow.

Authority, confidence and decisiveness that apply in one environment don't necessarily apply in another. Being sure of yourself isn't the same as being right, and a wise leader listens before he leads people into the desert.
